Introduction

In many data-driven systems, the most damaging events are not the frequent, predictable ones. They are the rare cases: a fraudulent transaction, a sudden sensor spike, an unexpected login pattern, or a manufacturing defect that appears only once in thousands of units. Anomaly detection focuses on identifying these unusual observations before they cause real impact. Among the most practical algorithms for this task is Isolation Forest, a method designed specifically to detect outliers efficiently, even when the dataset is large and complex. If you are learning applied machine learning in a data science course in Pune, Isolation Forest is worth understanding because it works well with limited labels and is often easier to operationalise than many density-based methods.

Why Anomaly Detection Is Challenging

Anomalies are difficult to model for two main reasons. First, they are rare, so you often do not have enough labelled examples to train a standard supervised classifier. Second, “normal” behaviour can vary across time, customer segments, or operating conditions. A transaction that is normal for one user could be suspicious for another.

Traditional approaches like z-scores or simple thresholds work only when the data is roughly well-behaved and the deviation is obvious. In real scenarios, anomalies may appear as subtle combinations of values across multiple features. Isolation Forest addresses these challenges by taking a different approach: instead of trying to learn what normal behaviour looks like in every detail, it directly tries to isolate unusual points.

The Core Idea Behind Isolation Forest

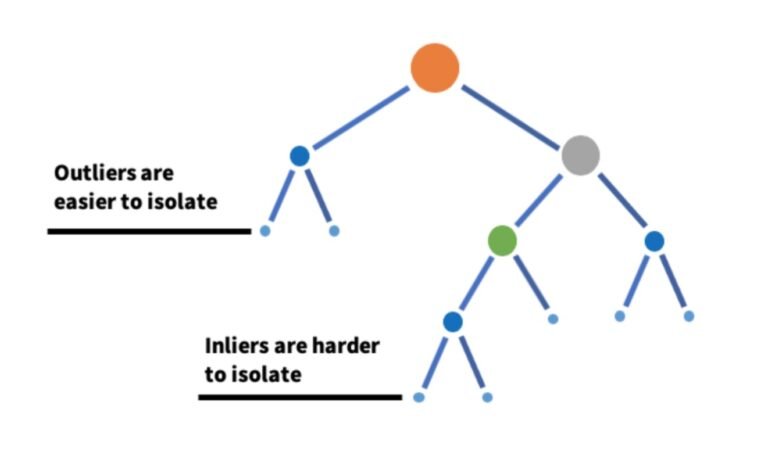

Isolation Forest is based on a simple observation: outliers are easier to separate from the rest of the data than typical points. Imagine repeatedly splitting a dataset by picking a feature at random and choosing a random split value. A point that lies in a sparse, unusual region will get separated quickly with fewer splits. A point in a dense region will require more splits before it ends up alone.

The algorithm builds many such random trees, called isolation trees (iTrees), each grown on a random subsample of the data. Each tree is created by:

- randomly selecting a feature,

- randomly selecting a split value within that feature’s observed range,

- repeating until points are isolated or a maximum depth is reached.

For every data point, the model measures the path length, that is, the number of splits needed to isolate that point in a tree. The average path length across many trees becomes the anomaly signal:

- Shorter average path length → more likely to be an anomaly

- Longer average path length → more likely to be normal

This logic is why Isolation Forest scales well. It never computes distances between pairs of points, a cost that grows quadratically with the number of points and that also becomes less informative in high dimensions.
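The splitting idea above can be sketched in a few lines of plain Python. This is a simplified illustration, not a production implementation: it follows the random splits for one point at a time instead of storing trees, uses the full dataset rather than a subsample, and applies no correction when the depth cap is hit. The function names `path_length` and `average_path_length` and the toy data are assumptions made for this example.

```python
import random

def path_length(point, data, rng, depth=0, max_depth=10):
    """Count the random splits needed to isolate `point` within `data`."""
    # Stop when the point is alone or the depth cap is reached.
    if len(data) <= 1 or depth >= max_depth:
        return depth
    feature = rng.randrange(len(point))        # pick a feature at random
    lo = min(row[feature] for row in data)
    hi = max(row[feature] for row in data)
    if lo == hi:
        # All remaining rows share this value, so this sketch stops here.
        return depth
    split = rng.uniform(lo, hi)                # random split in the observed range
    # Keep only the side of the split that contains the point.
    if point[feature] < split:
        side = [row for row in data if row[feature] < split]
    else:
        side = [row for row in data if row[feature] >= split]
    return path_length(point, side, rng, depth + 1, max_depth)

def average_path_length(point, data, n_trees=200, seed=0):
    """Average the path length over many independent random partitions."""
    rng = random.Random(seed)
    total = sum(path_length(point, data, rng) for _ in range(n_trees))
    return total / n_trees

# Toy data: a dense 2-D cluster plus one far-away point.
data_rng = random.Random(42)
cluster = [(data_rng.gauss(0, 1), data_rng.gauss(0, 1)) for _ in range(200)]
outlier = (8.0, 8.0)
data = cluster + [outlier]

outlier_avg = average_path_length(outlier, data)
typical_avg = average_path_length(cluster[0], data)
print(f"outlier: {outlier_avg:.2f} splits, typical point: {typical_avg:.2f} splits")
```

On data like this, the far-away point should need noticeably fewer splits on average than a point inside the cluster, which is the signal the forest relies on.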

How the Anomaly Score Works

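Isolation Forest converts the average path length into an anomaly score. You do not need the full formula to use it effectively, but conceptually it normalises path lengths by the depth expected for a dataset of that size, so scores fall into a consistent range. In the original formulation the score lies between 0 and 1: values close to 1 indicate likely anomalies, and values well below 0.5 indicate normal points. Check the sign convention of your library before thresholding, since some implementations negate the score.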
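For readers who do want to see the normalisation, the sketch below follows the score as defined in the original Isolation Forest paper: s = 2^(-E[h(x)] / c(n)), where E[h(x)] is the average path length and c(n) is the expected path length of an unsuccessful binary-search-tree lookup among n points. The function names are chosen for this example, and the harmonic number is approximated with a logarithm plus the Euler-Mascheroni constant.

```python
import math

EULER_GAMMA = 0.5772156649

def c(n):
    """Expected path length of an unsuccessful BST search over n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0  # exact value; the log approximation is poor for tiny n
    harmonic = math.log(n - 1) + EULER_GAMMA   # approximates H(n - 1)
    return 2.0 * harmonic - 2.0 * (n - 1) / n

def anomaly_score(avg_path_length, n):
    """Map an average path length to a score in (0, 1]; higher = more anomalous."""
    return 2.0 ** (-avg_path_length / c(n))

n = 256  # number of points each tree was grown on
for avg in (2.0, c(n), 12.0):
    print(f"average path length {avg:5.2f} -> score {anomaly_score(avg, n):.3f}")
```

A point whose average path length equals c(n) scores exactly 0.5, shorter paths push the score towards 1, and longer paths push it towards 0.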

Two practical implications matter here:

- Isolation Forest can be trained on mostly normal data without labels.

- The decision boundary depends on how many anomalies you expect. In practice, you set a contamination parameter (expected proportion of outliers) or choose a threshold based on validation and business tolerance.

These choices often come up in a data scientist course, because model performance is not only about algorithm selection. It is also about setting the right operating point for false positives versus false negatives.
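As a minimal sketch of the contamination setting, the example below uses scikit-learn's `IsolationForest` on synthetic data. The data, the 2% contamination value, and the variable names are assumptions for illustration. Note that scikit-learn reports scores with the opposite sign to the convention described earlier: `score_samples` returns lower values for more abnormal points, and `fit_predict` returns -1 for anomalies and 1 for normal points.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

# Synthetic data: 500 "normal" points plus 10 injected outliers (illustrative only).
rng = np.random.default_rng(0)
normal = rng.normal(loc=0.0, scale=1.0, size=(500, 2))
outliers = rng.uniform(low=6.0, high=8.0, size=(10, 2))
X = np.vstack([normal, outliers])

# contamination is the expected share of outliers; it sets the decision threshold.
model = IsolationForest(n_estimators=100, contamination=0.02, random_state=0)
labels = model.fit_predict(X)      # -1 = anomaly, 1 = normal
scores = model.score_samples(X)    # lower = more abnormal in scikit-learn

n_flagged = int((labels == -1).sum())
print(f"flagged {n_flagged} of {len(X)} points")
```

Because the threshold is derived from the contamination value, roughly that share of the training data is flagged whether or not that many true anomalies exist, which is why the estimate deserves care.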

Where Isolation Forest Performs Well

Isolation Forest is widely used because it works across many industries and data types. Common applications include:

- Fraud and risk monitoring: detecting unusual transaction amounts, frequencies, or device patterns

- Cybersecurity: spotting abnormal login times, access paths, or traffic bursts

- Manufacturing quality: identifying defective output patterns using process measurements

- IT operations: detecting anomalies in CPU usage, latency, or error rates

- Healthcare analytics: flagging unusual readings or patient event patterns (with proper clinical oversight)

It performs especially well when anomalies are “few and different” and the dataset has enough normal examples to represent typical behaviour.

Practical Implementation Considerations

Even a strong algorithm can fail if the input data is not prepared properly. When implementing Isolation Forest, pay attention to:

Feature scaling and encoding:

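Isolation Forest picks split values uniformly within each feature's observed range, so rescaling a feature linearly (for example, standardising it) matters far less than it does for distance-based methods. Non-linear transforms such as a log do change the splits, so apply them deliberately. Categorical variables still need a consistent numeric encoding. One-hot encoding is common, but a high-cardinality column expands into many binary features, each as likely to be picked for a split as any numeric feature, so consider grouping rare categories first.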
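One way to keep the encoding consistent between training and scoring is to bundle it with the model in a scikit-learn `Pipeline`. The sketch below assumes a hypothetical table with one numeric column (`amount`) and one categorical column (`channel`); both the column names and the data are invented for illustration.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.ensemble import IsolationForest
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder

# Hypothetical transactions table (column names are assumptions).
rng = np.random.default_rng(1)
df = pd.DataFrame({
    "amount": rng.normal(50.0, 10.0, size=300),
    "channel": rng.choice(["web", "app", "store"], size=300),
})

# One-hot encode the categorical column; pass the numeric column through unchanged.
preprocess = ColumnTransformer(
    transformers=[("cat", OneHotEncoder(handle_unknown="ignore"), ["channel"])],
    remainder="passthrough",
)
pipeline = Pipeline([
    ("preprocess", preprocess),
    ("detector", IsolationForest(random_state=0)),
])

labels = pipeline.fit_predict(df)   # -1 = anomaly, 1 = normal
print(pd.Series(labels).value_counts())
```

Setting `handle_unknown="ignore"` means a category that never appeared in training is encoded as all zeros at scoring time instead of raising an error.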

Contamination and threshold selection:

If you set contamination too high, you will label too many points as anomalies. If too low, you may miss important events. Start with a reasonable estimate and refine it based on outcomes.
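An alternative to fixing contamination up front is to take the raw scores and choose the threshold yourself. The sketch below, again using scikit-learn on synthetic data, sets the cut-off at a chosen quantile of the training scores. The 1% `alert_rate` is a hypothetical business tolerance, not a recommended value.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(2)
X_train = rng.normal(size=(1000, 3))
# New batch: five ordinary points and one far-away point (illustrative only).
X_new = np.vstack([rng.normal(size=(5, 3)), np.full((1, 3), 9.0)])

model = IsolationForest(random_state=0).fit(X_train)
train_scores = model.score_samples(X_train)   # lower = more abnormal

alert_rate = 0.01                             # hypothetical tolerance: ~1% of traffic
threshold = np.quantile(train_scores, alert_rate)

new_scores = model.score_samples(X_new)
is_alert = new_scores < threshold
print(is_alert)
```

Keeping the threshold separate from the model makes it easy to revisit the operating point as investigation outcomes come in, without refitting the forest.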

Interpretability:

Isolation Forest provides an anomaly score, but explaining “why” can require additional work. A practical approach is to compare an anomalous point to typical values, examine feature contributions through local methods, or use rule-based summaries for investigation teams.
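The first of these ideas, comparing a flagged point to typical values, can be sketched with a robust z-score per feature. This is a simple heuristic added for illustration, not something Isolation Forest itself produces, and the function name and feature names are hypothetical.

```python
import numpy as np

def feature_deviations(point, reference, feature_names):
    """Rank features by how far `point` sits from the reference median."""
    median = np.median(reference, axis=0)
    mad = np.median(np.abs(reference - median), axis=0)
    # 1.4826 * MAD approximates the standard deviation for normal data;
    # the small constant avoids division by zero for constant features.
    scale = 1.4826 * mad + 1e-12
    z = (point - median) / scale
    order = np.argsort(-np.abs(z))             # largest deviation first
    return [(feature_names[i], float(z[i])) for i in order]

rng = np.random.default_rng(4)
reference = rng.normal(size=(500, 3))          # rows considered typical
names = ["cpu_load", "latency_ms", "error_rate"]
flagged_point = np.array([0.1, 7.0, -0.2])     # unusual only in the second feature

ranking = feature_deviations(flagged_point, reference, names)
for name, z in ranking:
    print(f"{name:12s} robust z = {z:+.2f}")
```

A ranking like this gives an investigation team a starting point, but it looks at one feature at a time and will miss anomalies that only show up as unusual combinations of values.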

Concept drift:

Normal behaviour can change over time. For long-running systems, retraining schedules and monitoring are important, even for unsupervised models.
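A minimal sketch of this idea is to monitor the share of flagged points per batch and refit on a recent window when that share moves away from what is expected. The window size, contamination value, and helper names below are assumptions for illustration, and the drift is simulated by shifting the mean of synthetic data.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

def fit_on_recent(history, window=1000, seed=0):
    """Fit a fresh model on the most recent `window` rows only."""
    recent = history[-window:]
    return IsolationForest(contamination=0.01, random_state=seed).fit(recent)

def flagged_rate(model, batch):
    """Share of a batch that the model labels as anomalous (-1)."""
    return float((model.predict(batch) == -1).mean())

rng = np.random.default_rng(3)
old = rng.normal(0.0, 1.0, size=(1000, 2))        # behaviour before the shift
shifted = rng.normal(4.0, 1.0, size=(1200, 2))    # behaviour after the shift
new_batch = shifted[1000:]                        # held-out batch from the new regime

stale_model = fit_on_recent(old)
rate_before = flagged_rate(stale_model, new_batch)

# Refit once enough post-shift data has accumulated.
fresh_model = fit_on_recent(np.vstack([old, shifted[:1000]]))
rate_after = flagged_rate(fresh_model, new_batch)

print(f"stale model flags {rate_before:.0%}, refreshed model flags {rate_after:.0%}")
```

A sudden jump in the flagged rate does not tell you whether the data drifted or a real incident is under way, so in practice the rate is a trigger for review rather than for automatic retraining.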

These implementation details are the difference between a model that looks good in a notebook and one that works reliably in production.

Conclusion

Isolation Forest is a practical, efficient algorithm for anomaly detection because it isolates outliers using random feature selection and random split values. Points that are easier to separate tend to be anomalous, and this simple idea scales well to large datasets. With thoughtful feature preparation, threshold setting, and monitoring, Isolation Forest can support real-world detection tasks in fraud, operations, cybersecurity, and quality control. For learners building applied skills through a data science course in Pune or advancing hands-on modelling through a data scientist course, this algorithm is a valuable addition to your toolkit because it balances simplicity, scalability, and strong performance in many common anomaly scenarios.

Business Name: ExcelR – Data Science, Data Analyst Course Training
